If you've ever tried to scale outbound outreach, you know the drill. Hire SDRs, train them, watch them churn. Or grab a LinkedIn automation tool, run it for a week, and get your account banned because it shares your IP with fifty other users. Manual outreach caps at maybe fifty messages a day before you burn out. None of it scales.

We wanted to build something different: infrastructure that treats each outreach agent as its own isolated entity with its own identity, its own warming protocol, and its own intelligence.

What we built

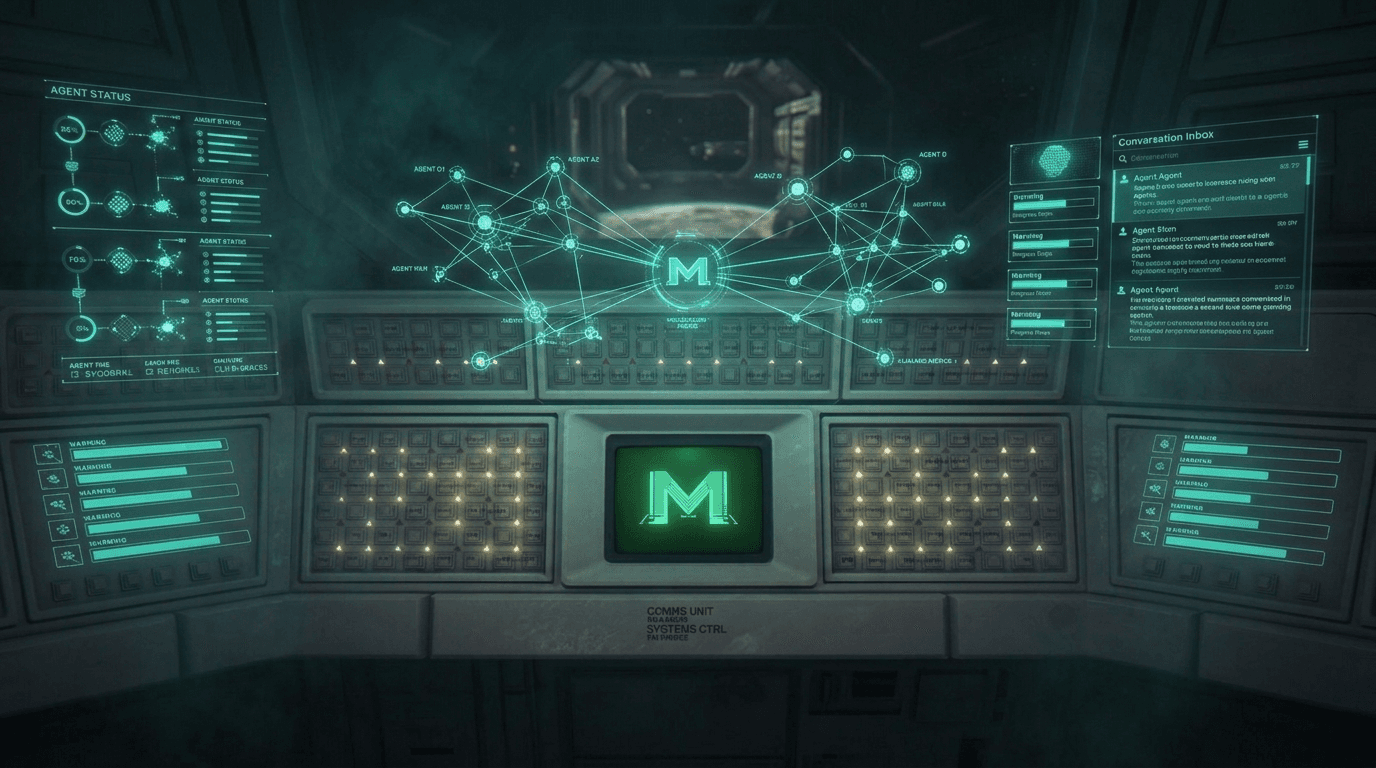

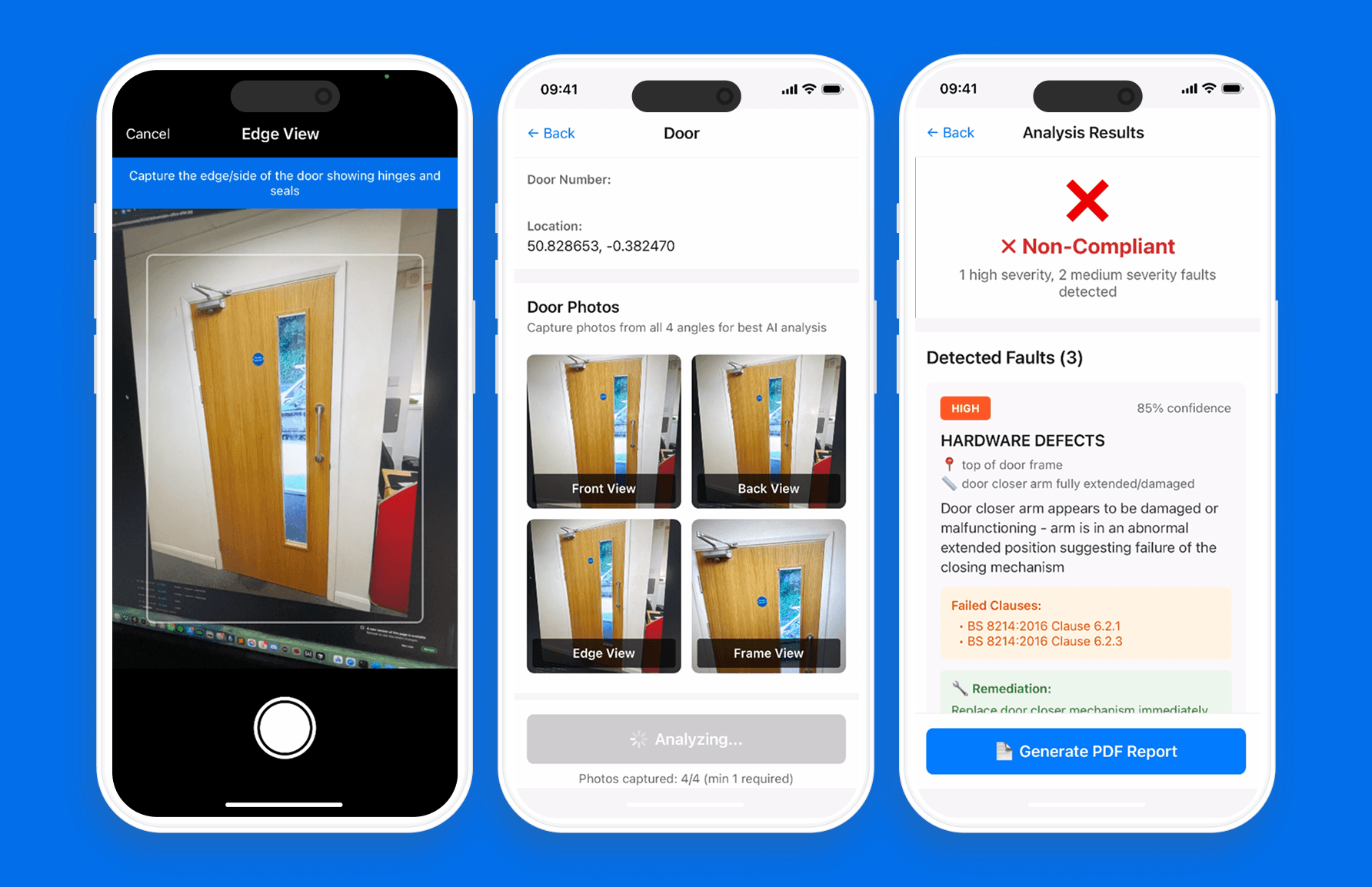

Muthur is a fleet management platform for autonomous AI outreach agents. Think of it as a control centre where you can deploy agents that run social media outreach (Instagram, LinkedIn) and cold email campaigns — each on its own dedicated virtual machine with its own browser profile, proxy, and device fingerprint.

The two features that define the product:

The 90-day warming protocol. Most automation tools create an account and immediately blast messages. Platforms detect this instantly. Muthur runs a five-phase warming protocol — starting with passive browsing (Ghost phase), progressing through likes and comments (Lurker, Engager), and only reaching full outreach capacity after 90 days. Agents follow bimodal activity curves that peak at 9am and 8pm, mimicking genuine human behaviour. It's patience as a feature.
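To make the bimodal curve concrete, here is a minimal sketch rather than the production code. It models activity as the sum of two bell-shaped bumps centred on the 9am and 8pm peaks mentioned above. The function names and the 1.5-hour spread are assumptions made for illustration.

```typescript
// Hypothetical sketch of a bimodal activity curve.
// The peak hours come from the text; the spread is an assumed value.
const PEAK_HOURS = [9, 20];
const SPREAD_HOURS = 1.5; // assumption: standard deviation of each peak, in hours

// Bell-shaped bump around one peak, measured on a 24-hour clock.
function gaussianBump(hour: number, peak: number, spread: number): number {
  // Wrap around midnight so 23:00 and 01:00 count as two hours apart.
  const raw = Math.abs(hour - peak) % 24;
  const distance = Math.min(raw, 24 - raw);
  return Math.exp(-(distance * distance) / (2 * spread * spread));
}

// Relative activity level between 0 and 1 for a local hour (fractions allowed).
function activityWeight(hour: number): number {
  const total = PEAK_HOURS.reduce(
    (sum, peak) => sum + gaussianBump(hour, peak, SPREAD_HOURS),
    0,
  );
  return Math.min(1, total);
}
```

A scheduler could multiply an agent's per-hour action budget by `activityWeight(hour)`, so that most actions cluster around the two peaks and almost none happen overnight.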

Mother Agent. Instead of managing your fleet through dashboards and spreadsheets, you talk to Mother. "Deploy three agents on Instagram for this product." "What's the warming progress for Agent-7?" "Show me qualified leads from this week." Natural language fleet command with 32 tools underneath.

How we built it

This was a three-day intensive sprint — one of the most concentrated builds we've done. The architecture follows a hub-and-spoke model: a Next.js control plane (the Mothership) connects to a fleet of Hetzner VPS nodes via Tailscale mesh networking. Each agent runs on its own VM with its own IP address.

Tech stack: Next.js 15, React 19, TypeScript, Drizzle ORM with Neon PostgreSQL, Tailscale for the mesh network, and the Vercel AI SDK for the Mother Agent.

We used our autonomous build loop (Ralph Wiggum) to deliver 134 user stories across three days. The PRD gets converted to atomic stories in a JSON file, and each story runs with fresh context, averaging about three minutes per feature. Day one was foundation — auth, data model, provisioning. Day two was the marathon: 105 features shipped in a single day. Day three was security hardening and deploy blockers.
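The story file and loop can be pictured roughly like this. It is a simplified sketch, not the actual Ralph Wiggum implementation: the `Story` shape, field names, and helper functions are all hypothetical. The point it illustrates is that each run is handed a single story and nothing else, which is what "fresh context" means in practice.

```typescript
// Hypothetical shape of one atomic story in the JSON file.
type StoryStatus = "pending" | "done" | "failed";

interface Story {
  id: string;
  title: string;
  acceptanceCriteria: string[];
  status: StoryStatus;
}

// Pick the first story that has not been attempted yet.
function nextPending(stories: readonly Story[]): Story | undefined {
  return stories.find((story) => story.status === "pending");
}

// Build a prompt from this story alone, so no earlier conversation leaks in.
function buildPrompt(story: Story): string {
  const criteria = story.acceptanceCriteria.map((c) => `- ${c}`).join("\n");
  return `Implement story ${story.id}: ${story.title}\nAcceptance criteria:\n${criteria}`;
}

// Record the outcome without mutating the original list.
function markStory(stories: readonly Story[], id: string, passed: boolean): Story[] {
  const status: StoryStatus = passed ? "done" : "failed";
  return stories.map((story) => (story.id === id ? { ...story, status } : story));
}
```

A driver would repeat three steps until `nextPending` returns `undefined`: build the prompt, hand it to a fresh agent session, and write the result back with `markStory`.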

Final count: 103,000 lines of TypeScript, 132 API endpoints, 61 service modules.

What we learned

What went well: The hub-and-spoke architecture was the right call. Isolating each agent on its own infrastructure means a ban is contained, not catastrophic. The autonomous build loop genuinely works at this scale — 134 stories in three days would be impossible otherwise.

The 90-day warming protocol required more research than expected. We went deep on how platforms detect automation, and the answer is usually timing patterns, not just fingerprints. Building bimodal activity curves with Gaussian delays between actions was satisfying to get right.
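For the Gaussian delays, the idea can be sketched as follows. This is an illustration under assumptions, not our exact implementation: it uses the Box-Muller transform to draw a normally distributed delay, then clamps it to a minimum so an action never fires implausibly fast. The function names and the parameters in the usage note are hypothetical.

```typescript
// Draw one sample from a normal distribution using the Box-Muller transform.
// `random` is injectable so the behaviour can be tested deterministically.
function sampleGaussian(
  mean: number,
  stdDev: number,
  random: () => number = Math.random,
): number {
  const u1 = 1 - random(); // shift [0, 1) to (0, 1] so Math.log stays finite
  const u2 = random();
  const z = Math.sqrt(-2 * Math.log(u1)) * Math.cos(2 * Math.PI * u2);
  return mean + stdDev * z;
}

// Delay before the next action, in milliseconds, never below `minMs`.
function nextDelayMs(
  meanMs: number,
  stdDevMs: number,
  minMs: number,
  random: () => number = Math.random,
): number {
  return Math.max(minMs, Math.round(sampleGaussian(meanMs, stdDevMs, random)));
}
```

For example, `nextDelayMs(45_000, 15_000, 5_000)` would give pauses that average around 45 seconds, vary from action to action, and never drop below five seconds. A fixed interval, by contrast, is exactly the kind of timing pattern that stands out.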

What was harder than expected: Multi-tenant isolation. Every database query needs to filter by organisation ID, and we baked this in from day one — but a few queries slipped through during the marathon day and had to be fixed during hardening. Retrofitting tenant isolation is painful; starting with it is essential.
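One way to stop queries slipping through is to make the unscoped path unavailable. The sketch below is an in-memory illustration of that idea, not our Drizzle code: callers only ever receive a handle that is already bound to one organisation, so forgetting the filter is not possible. The `Lead` type and `scopeToOrganisation` helper are hypothetical names.

```typescript
// Every tenant-owned row carries the organisation it belongs to.
interface TenantRow {
  organisationId: string;
}

// Hypothetical example row type.
interface Lead extends TenantRow {
  id: string;
  email: string;
  qualified: boolean;
}

// Return a query handle bound to one organisation.
// The tenant filter is applied here, once, rather than at every call site.
function scopeToOrganisation<T extends TenantRow>(
  rows: readonly T[],
  organisationId: string,
) {
  return {
    find(predicate: (row: T) => boolean = () => true): T[] {
      return rows.filter(
        (row) => row.organisationId === organisationId && predicate(row),
      );
    },
  };
}
```

In a real data layer the same pattern would wrap the ORM: request handlers get a scoped handle built from the session's organisation ID and never touch the raw table.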

The agent runtime bundle caused late integration issues. We should have built the pipeline for packaging and deploying the runtime earlier, not in the final hours. The MOTHERSHIP_URL environment variable had to be a Tailscale IP, not localhost — obvious in hindsight, but it cost us debugging time.
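A startup check would have caught the MOTHERSHIP_URL mistake immediately. The sketch below is hypothetical and deliberately narrow: it only accepts IPv4 addresses in 100.64.0.0/10, the range Tailscale assigns node addresses from, and it ignores Tailscale's IPv6 addresses and MagicDNS hostnames. The function names are made up for illustration.

```typescript
// True if `host` is an IPv4 address inside 100.64.0.0/10.
function isTailscaleIPv4(host: string): boolean {
  if (!/^\d{1,3}(\.\d{1,3}){3}$/.test(host)) return false;
  const octets = host.split(".").map(Number);
  if (octets.some((octet) => octet > 255)) return false;
  // A /10 starting at 100.64.0.0 covers second octets 64 through 127.
  return octets[0] === 100 && octets[1] >= 64 && octets[1] <= 127;
}

// Fail fast if the control plane URL is missing or not a Tailscale address.
function assertMothershipUrl(value: string | undefined): URL {
  if (!value) {
    throw new Error("MOTHERSHIP_URL is not set");
  }
  const url = new URL(value); // throws on malformed input
  if (!isTailscaleIPv4(url.hostname)) {
    throw new Error(
      `MOTHERSHIP_URL must point at a Tailscale IP, got "${url.hostname}"`,
    );
  }
  return url;
}
```

Calling this once when the agent runtime boots, with the value read from the environment, turns a confusing connection failure into a clear error message.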

What we'd do differently: Start with pgvector from day one instead of adding it later for the knowledge base. Set up CI/CD before the build sprint, not after. Add end-to-end tests alongside features rather than batching them.

Why this matters for Build Sprints

Muthur is product 14 of 52, and it's one of the most technically ambitious. Hub-and-spoke infrastructure, multi-tenant isolation, AI-powered fleet management, compliance checks, circuit breakers — this is the kind of complexity that usually takes months.

The build demonstrates what's possible when you combine a clear PRD, atomic user stories, and focused execution. It's the same approach we use in Build Sprints for clients: fixed scope, fixed timeline, production-ready code at the end.

The difference between three days and three months isn't about working harder. It's about eliminating ambiguity before you start building, automating the repetitive parts, and making scope decisions early rather than revisiting them endlessly.

Try it

Muthur is live at muthur.co. We're looking for design partners — agencies and growth teams who want to run their outreach through the platform and give us feedback in exchange for early access.

If you're spending more than an hour a day on outbound and want it to run itself, sign up for the waitlist.